Your Job Is Not at Risk. Your Justification Is.

Eleven years ago, Tim Urban published an article on Wait But Why that predicted exactly what is happening inside every company right now.

Millions of people read it. It went viral across LinkedIn, Twitter, dinner tables. Smart people forwarded it with notes like "fascinating" and "must-read."

Then they closed the tab and went back to work.

You might do the same with this one. It is longer than most things you read on LinkedIn. But the argument I am making is that closing the tab in 2015 is exactly why 2026 feels so sudden - and that doing it again is the most expensive decision you can make right now.

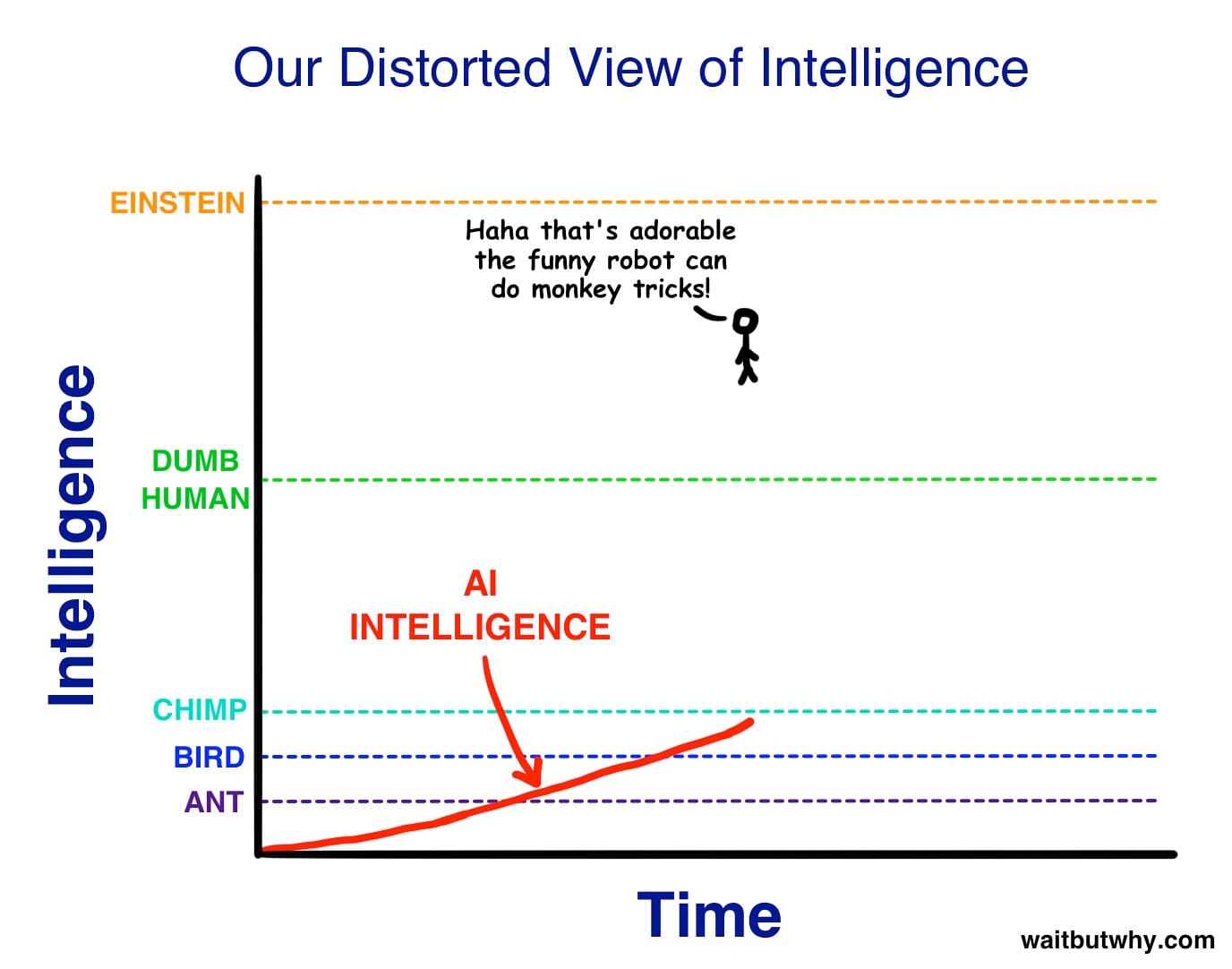

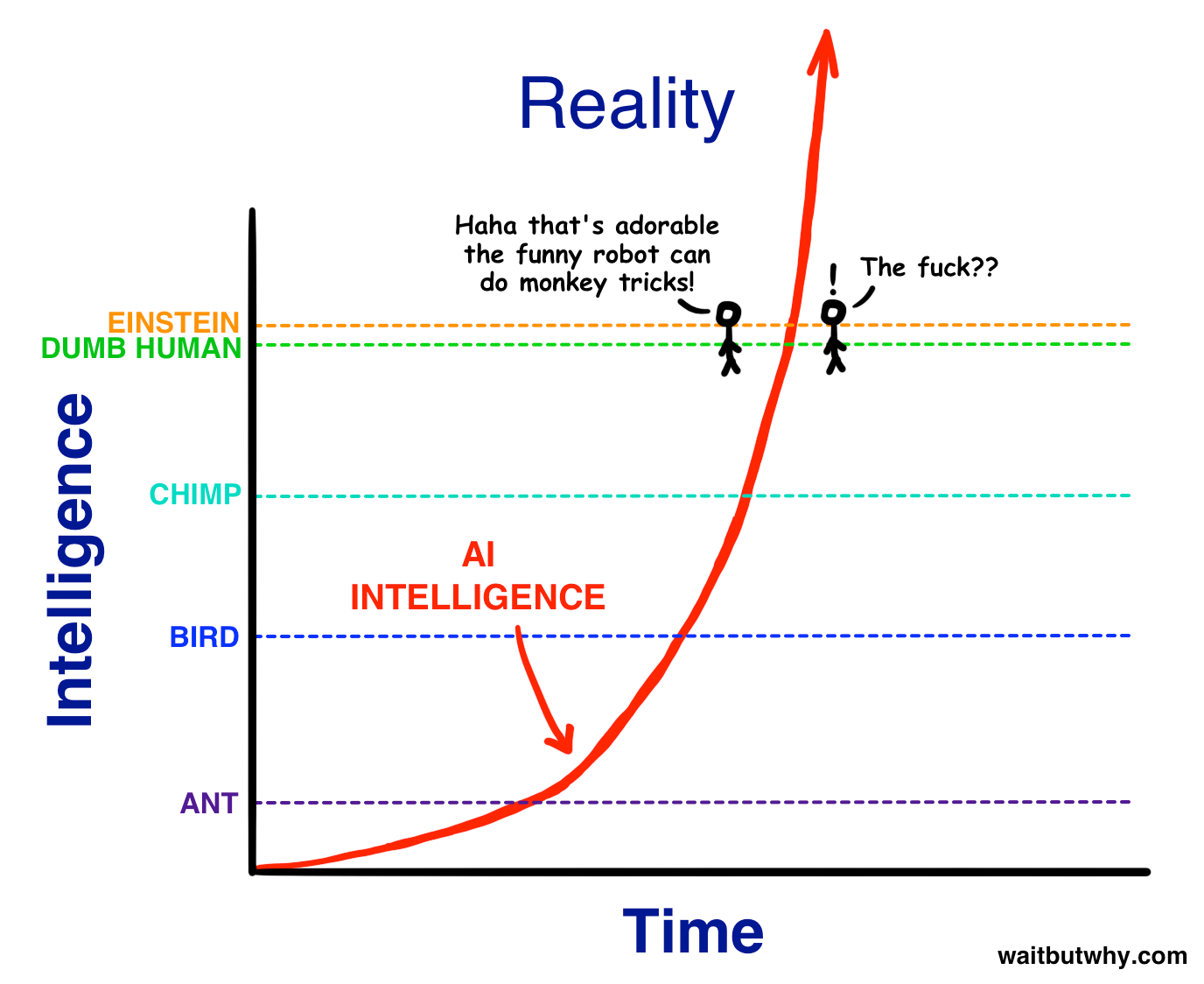

The article showed a simple chart. AI intelligence on the vertical axis, time on the horizontal. The curve starts below ant level. Climbs through bird, chimp. Approaches the "Dumb Human" line. A stick figure watches it arrive, arms crossed: "Haha, that's adorable, the funny robot can do monkey tricks."

Then the curve goes vertical. Crosses Dumb Human. Crosses Einstein. The same stick figure, one moment later: "The fuck??" - What's going on?

Here is what I want you to sit with: the curve Tim Urban drew in 2015 as a thought experiment is now a description of what actually happened. The "Haha" phase - ChatGPT writes emails, summarises documents, qualifies leads - that was 2022 and 2023.

It is not a prediction anymore. It is a schedule.

Two moments that changed how I think about this

The first was a number.

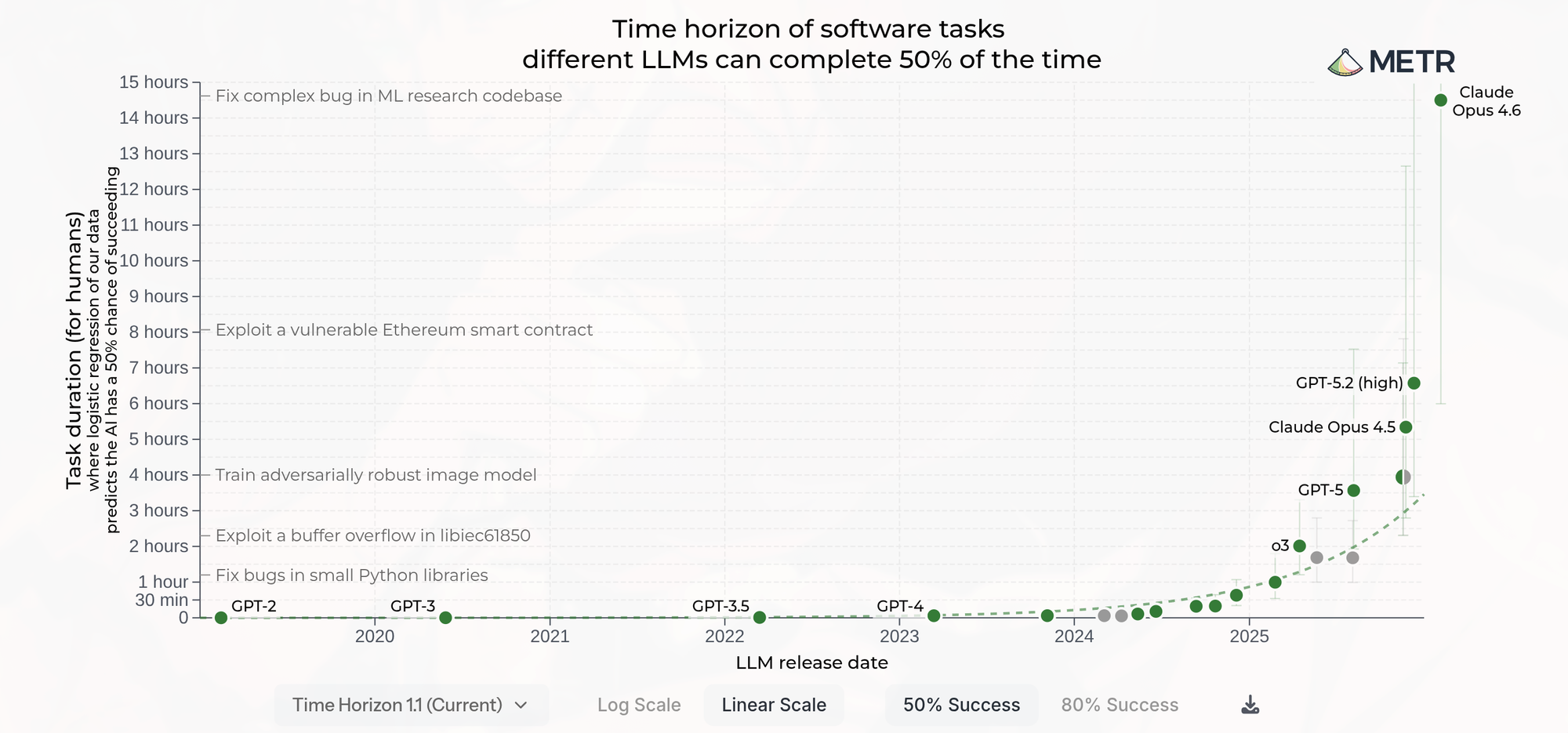

Earlier this year, a new version of Claude was released - Claude Opus 4.6. I was reading the technical measurements from METR, an independent nonprofit that evaluates AI capability in a specific and revealing way. Not exam scores or abstract benchmarks. They give AI agents complex, multi-step professional tasks - the kind drawn from software engineering, systems design, technical problem-solving - and measure difficulty in human time: how long it would take a skilled professional, with no prior context, to finish the same job.

In mid-2024, frontier models had time horizons measured in single-digit minutes. By early 2025, that had reached 15 to 30 minutes. By late 2025, nearly five hours. The doubling time across six years of data: approximately seven months.

Then Claude Opus 4.6 was measured. METR's estimate: a 50% time horizon of approximately 14.5 hours.

That is the task length at which the model succeeds roughly half the time. Not a summary. Not a draft. A genuinely complex, multi-step professional task - the kind that fills most of a knowledge worker's day. Now look at that number on the chart above: 14.5 hours. Longer than a standard working day.

To understand why that number matters, think about what it was before.

Two years ago, AI could reliably complete tasks that took a human expert around two minutes. Not hours - minutes. Simple, contained, low-stakes tasks. Then the number started moving. By mid-2024: still single-digit minutes. By early 2025: 15 to 30 minutes. By late 2025: nearly five hours. By early 2026: 14.5 hours.

That is not linear progress. That is the exponential curve Tim Urban drew in 2015 - except this time it is not a thought experiment. It is a benchmark. Every time you think the pace of change is fast, the next data point arrives and the previous one looks slow. The doubling time is approximately seven months. Which means the number will not stay at 14.5 hours for long.
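To make the arithmetic behind that claim concrete, here is a minimal sketch. It assumes the seven-month doubling time simply continues from the 14.5-hour figure, which nothing guarantees; the function name and the chosen time steps are mine, for illustration only, and this is an extrapolation rather than a forecast from METR.

```python
def projected_horizon_hours(current_hours, months_ahead, doubling_months=7.0):
    """Extrapolate a time horizon, assuming it doubles every `doubling_months`.

    This is plain exponential growth: horizon * 2^(elapsed / doubling time).
    The constant doubling time is an assumption, not a measured guarantee.
    """
    return current_hours * 2 ** (months_ahead / doubling_months)


if __name__ == "__main__":
    # Starting point from the text: a 50% time horizon of about 14.5 hours.
    for months in (7, 14, 21):
        hours = projected_horizon_hours(14.5, months)
        print(f"+{months} months: {hours:.1f} hours")
```

If the trend held, the horizon would pass one full day of expert work within seven months and exceed a standard working week within two years. Whether it holds is exactly the open question.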

The second moment was a workshop.

We were working through how the role of the real estate agent would change over the next three years. We discussed and mapped the tasks, the workflows, the client interactions. Rigorous work. And somewhere in the middle of that session, I felt something shift - not about agents, but about us. About every function inside the company.

The conversation was about the external market. But the question it raised was internal: if we are redesigning how agents work around AI, why are we not asking the same question about how every person in our own operations works?

That discomfort did not go away. It sharpened into a thesis.

The shift we are living through creates something most disruptions do not: a genuine upgrade in what it means to work. AI does not eliminate jobs. It eliminates the justification for doing them badly.

Everything that follows is an attempt to be precise about what that means - and what it makes possible.

What the data says - and what it does not

In March 2026, Anthropic published its labour market impacts research - the most methodologically careful study I have seen on how AI is affecting employment. The headline finding surprised most people: there is no systematic increase in unemployment for workers in the most AI-exposed occupations since late 2022.

The mass displacement has not happened yet.

But the same study finds something more important: the hiring rate for workers aged 22-25 in highly AI-exposed occupations has dropped 14% since ChatGPT launched. Not layoffs. Not-hiring. Quietly. Without announcement.

And here is the number that shapes everything: AI currently covers only 33% of tasks in the Computer and Mathematics category - despite 94% of those tasks being theoretically feasible for AI. There is a vast gap between what AI can do and what it is actually doing inside most organisations.

That gap is the window - and it is still open. BCG identifies the companies closing it deliberately as "future-built": the 5% capturing most of the economic value from AI while the other 95% run pilots. These companies expect twice the revenue impact and 40% greater cost reduction by 2028. That is not a marginal improvement. It is a structural gap that, once it opens, does not close.

It took eleven years to get from Tim Urban's article to a model with a 14.5-hour autonomous work horizon. It will not take eleven more years to get to the next inflection. The window is still open. But it is closing at a pace that 2015 Tim Urban would have drawn as nearly vertical.

The shift that affects every function - and the profile it demands

This is where most discussions about AI and organisations get it wrong. They focus on the external product - the agent, the chatbot, the customer interface. They miss the internal transformation, which is more fundamental and more urgent.

David Autor and Neil Thompson at MIT documented this in their 2025 paper "Expertise", drawing on four decades of automation data. Their finding: when routine tasks in a function are automated, the remaining tasks require more expertise. The work that stays becomes more valuable. What remains is everything that required genuine human judgment in the first place.

This produces a specific structure inside every organisation. I think of it as three layers. The execution layer - the work that is repeatable, rule-based, and learnable - is moving to AI. The judgment layer - the work that requires context, accountability, and the ability to be wrong in a way that matters - becomes everything. And between them is the orchestration layer: the work of directing AI agents toward the right outcomes, managing exceptions, setting the boundaries within which automation operates, and taking responsibility for what comes out.

The organisations pulling away right now understand that the orchestration layer is not a technical role. It is a human role - and it is the most important new role in every function.

This shift demands a specific profile. In organisational design, it has a name: T-shaped. Deep expertise in one domain - the vertical bar of the T - combined with genuine understanding across multiple functions - the horizontal bar. Enough knowledge of engineering to judge an AI-generated architecture. Enough understanding of finance to challenge an AI-produced analysis. Enough marketing instinct to know whether an AI-written campaign is true to the brand.

The T-shaped person is not the person who knows the most. It is the person who can connect what they know to what needs to happen - and hold AI accountable for the gap in between. This is not a new hire profile. It is the direction every existing role is moving.

This is not a Sales phenomenon or a Technology phenomenon. It is happening simultaneously in every function.

In Finance, the work of compiling reports, reconciling data, and producing first-draft analyses is going to AI. What remains is the judgment: which number actually matters, what it means for the decision, and how to communicate it to someone who needs to act.

In Marketing, the work of producing content, managing campaigns, and tracking performance is going to AI. What remains is the judgment: what the brand stands for, which audience deserves attention, and whether the message is true.

In Engineering, the trajectory is the most visible. When Claude Code launched in early 2025, it wrote 20% of the code for Boris Cherny, who heads the product at Anthropic. By May, 30%. By November, 100%. He has not manually edited a single line since. Company-wide at Anthropic, between 70 and 90% of code is now AI-generated. At Microsoft and Google, the figure is around 30% - impressive until you see what the next nine months look like on that curve. Anthropic's CPO Mike Krieger describes what changes when AI writes most of the code: the bottleneck moves upstream to deciding what to build, and downstream to reviewing what ships. Coding itself is no longer the job. Judgment about what the code should do - and whether it should exist at all - is.

The question every organisation needs to ask is not "how do we use AI to do our current work better?" That is the question of the 95%. The question of the 5% is more interesting: "if AI handled every step before the judgment call, what would our people actually do - and are they doing that now?"

What this looks like in one function

Take inside sales. Until recently, qualifying a lead meant a human being reading context, making a call, logging notes, sending a follow-up, and checking back three days later. The execution layer - reading, logging, scheduling, chasing - consumed around 70% of the time. The judgment call was 10 minutes at the end of a 45-minute process.

With AI handling the execution layer, that ratio inverts entirely. The AI agent reads the lead, tracks behavioural signals across every touchpoint - how many times did this person return to the valuation tool, did they read the market report or close it immediately, did they request a callback or wait to be contacted - and builds a live picture of where they actually are in their decision process. Not where they say they are. Where their behaviour says they are.

The inside sales person operating at the judgment layer does not simply book a time slot. They take what the AI agent has assembled, add their own read of the situation, and hand the agent - the human one, the person who will sit across from this client - a person, not a name. With context. With a clear view of what this client actually needs to hear. Are they genuinely ready to sell, or gathering information for a decision six months from now? Are they uncertain about price, or uncertain about the process? What would make this conversation valuable for them rather than just efficient for the organisation?

That handoff - from AI agent to inside sales person to real estate agent - is the three-layer stack in action. Execution, orchestration, judgment. Each doing what only it can do.

That is not a productivity improvement. That is a different job. And it is a significantly better one.

Every function in the company faces the same redesign. Inside sales is one illustration. The question is the same whether you work in Finance, Marketing, Engineering, HR, Backoffice or overall Operations: what is the judgment call in your role - and are you spending most of your time on it, or on the work that should already be automated?

What I changed about my own work

I want to be specific about what this meant for me personally - because concrete examples are more useful than general advice.

Over the past weeks, I built what I now think of as a CEO intelligence system. It pulls automatically from my emails, calendar, Google Drive, meeting notes, and project management tools - and produces a daily outlook, a weekly review, and a weekly forward view. It tracks meeting outcomes and open tasks (some of them already automated), and it flags patterns in how time is actually being spent versus how it should be spent.

The first thing it showed me was uncomfortable: a significant portion of my week was occupied by meetings that were not moving anything forward. Not because the people in them were not good. Because the decisions that justified those meetings were either already made, or could have been made without a meeting at all.

I cut them. Not all of them - but enough that the shape of my week changed.

What replaced them was not more meetings. It was the work I had been deferring: longer strategic conversations, decisions I had been avoiding because I never had the right context assembled at the right moment, relationships I had been managing reactively instead of intentionally.

The system did not make me smarter. It made visible what was already true - that a large portion of what felt like leadership was processing. And AI processes faster and more consistently than I do.

What I do now that I did not do enough before: I focus almost entirely on the things that actually move the needle. Not because I suddenly have more discipline. Because the system shows me, every morning, what those things are - and what is getting in the way.

I am not sharing this as a success story. The transition was disorienting. Moving into the judgment layer requires accepting that a significant portion of what felt like valuable work was, in fact, work that should have been automated earlier. That is not a comfortable realisation for a CEO - or for anyone.

But it is an honest one.

I used to think I was doing leadership. I was doing processing.

What this asks of us - and what it makes possible

I want to start with what I genuinely believe: AI represents the biggest structural shift in how organisations work since the internet - and possibly larger, because the internet changed how we access information, while AI changes how we do the work itself. And I believe it is an opportunity. Not despite the disruption - because of it.

We have spent six years building a platform around a thesis: that the future of real estate platform brokerage is an integrated system where AI handles the intelligence layer and humans handle the judgment layer. Every component we have built - PropertiOS, our valuation engine, our lead intelligence platform - was designed toward exactly the moment we are now entering. The technology has not arrived ahead of us. We built toward it.

That is an unusual position. And I do not take it for granted.

I also want to be honest about what I see in the people around me. Six years of navigating a pandemic, several wars, inflation cycles, and the most challenging Swiss real estate market in a decade creates a specific kind of tiredness. Not weakness - tiredness. There is a difference. Research from Emergn surveying more than 750 global organisations found that nearly half of employees are experiencing transformation fatigue right now - and 52% attribute it specifically to AI. I believe that number. I feel it sometimes myself.

And I want to be honest about something harder: not everyone will make this transition at the same pace, and as a CEO, that is the part I find genuinely difficult.

The organisations that navigate this well are the ones that have done hard things before - and choose to move again. Not because the tiredness is not real. But because this particular shift is different from every previous disruption - and the evidence on what good change looks like is clear. Klarna learned this the hard way: after replacing 700 customer service agents with AI, customer satisfaction collapsed and they quietly rehired. The lesson was not that AI failed. It was that removing people without redesigning the work around them breaks the system customers rely on. The fatigue does not come from transformation. It comes from organisations that accelerate task volume without redesigning what work actually is.

The version of this I am building at properti is different: change that frees, not change that accumulates. We are investing in making this transition navigable - through structured conversations, by building the tools and the organisation internally that make AI-native work accessible to everyone on the team, and by being honest when something is not working.

For any organisation navigating this, three things matter. First: look at your own work this week with curiosity, not judgment - which parts of what you do today could AI handle, and what would you do with that time? The answer is almost always more interesting than the question suggests. Second: have the conversations. With managers, with teams, across functions. The companies that navigate this well do not do it through announcements. They do it through a thousand small conversations where people figure out together what changes and what stays. Third: understand that moving faster than the market is not a corporate slogan. It is the clearest advantage we have - and it belongs to everyone in an organisation, not just leadership.

The people who will define this next chapter

High-potential people with genuine hunger for what comes next will have more opportunity than any generation before them. IBM's decision to triple entry-level hiring in the US in 2026 confirms this - it is a deliberate bet that people who arrive already fluent in AI-native workflows, who are ready to operate at the judgment and orchestration layers from day one, are more valuable than experienced people who resist changing how they work.

The WEF Future of Jobs 2025 report projects 170 million new roles created alongside 92 million displaced. The people who fill those new roles will not arrive with the right job title. They will arrive with the right orientation: curiosity, adaptability, and the willingness to treat AI as the highest-leverage opportunity they have ever been given.

The honest counterpart to this opportunity: performance that relies primarily on task execution AI already does better will face real pressure. Not as punishment - as a structural reality that has accompanied every major technological shift in history. The difference this time is that the transition window is visible, the direction is clear, and the tools to make the shift are already here.

The decision that separates the 5% from the rest

The companies that end up in the 5% and the ones that do not will not be separated by technology. They will be separated by one decision, made now or made too late: did they redesign every function around the judgment and orchestration layers, or did they keep optimising the execution layer until it was automated underneath them?

I believe properti will be in the 5%. Not because of hope - because of what we have already built, because this team has proven repeatedly that it moves faster than the market expects, and because the window is still open for those who choose to move through it deliberately.

The opportunity is real. It is in front of us right now. And it will not wait for us to feel ready. The companies in the 5% did not get there because they were certain. They got there because they moved while others were still deciding.

The tab is open.

The question is what you do next.

This is the third article in a series on AI and the future of real estate and work. The first piece examined which parts of real estate survive the autopilot. The second looked at what agents will actually do in 2030.